The interactive device you can operate with your mouth

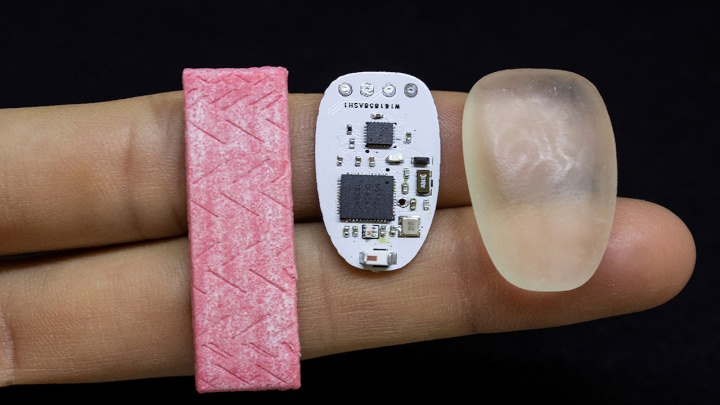

Imagine a device that feels like a piece of chewing gum, is no bigger than one, and lets you answer the phone simply by biting down on it.

That technology now exists in ChewIt, a novel interface developed by the Augmented Human Lab team at the Auckland Bioengineering Institute (ABI), University of Auckland.

It’s a tiny piece of technology encased in a flexible, custom-made printed circuit board (PCB). You pop it in your mouth, and it allows discreet, hands-free interaction with your phone, computer, smartwatch and other devices.

ChewIt was developed by the team led by Associate Professor Suranga Nanayakkara, who made international headlines in recent years with the FingerReader, a prototype device worn on the finger that lets users point at words (such as those on the spine of a book or in a restaurant menu) and have them read aloud.

Since moving from Singapore to New Zealand last year, Nanayakkara and his team have produced a number of innovative technologies. Along with ChewIt, they have developed GymSoles, a pressure-sensitive insole that vibrates to give users vibrotactile feedback, helping them maintain correct body posture.

GymSoles was tested and shown to be effective for people performing exercises such as squats and deadlifts, where it helped them maintain the correct centre of pressure, but the insoles could be used to improve posture in myriad contexts.

Nanayakkara has an almost philosophical approach to technology. He wants to address what he sees as a mismatch between what technology has to offer and innate human behavior, and his research focuses on developing technologies that respond to innate human behavior rather than obliging humans to adapt to the requirements of the technology.

“We want to design and develop systems that can understand the user rather than us having to tell the technology what to do every time – technologies that can understand us much better than technology currently does.”

He calls such technologies ‘assistive augmentation’. “It’s when the system understands the abilities, behavior and emotions of the user, and when the system is unobtrusive and integrated with our body or our behavior.

“And it should be about strengthening and extending the physical and sensorial abilities of the user, allowing them to do what they couldn’t do before. When you meet all three measures, that’s assistive augmentation.”

